A legacy system that “works fine” is still costing you. Security patches stop. Developers refuse to work on it. Every new feature takes 3x longer because the codebase fights back. The question is not whether to migrate, but how to do it without breaking what already works.

What makes legacy migration risky?

Four risks dominate every legacy migration:

- Data loss during schema changes or data transformation

- Extended downtime that disrupts business operations

- Compatibility breaks with existing integrations and APIs

- Staff resistance when teams face unfamiliar tools and patterns

Ruby on Rails mitigates these risks through Active Record migrations (version-controlled schema changes with rollback capability), incremental upgrade paths (version-by-version rather than big-bang), and a mature ecosystem of gems for every migration scenario.

How do you audit a legacy system before migration?

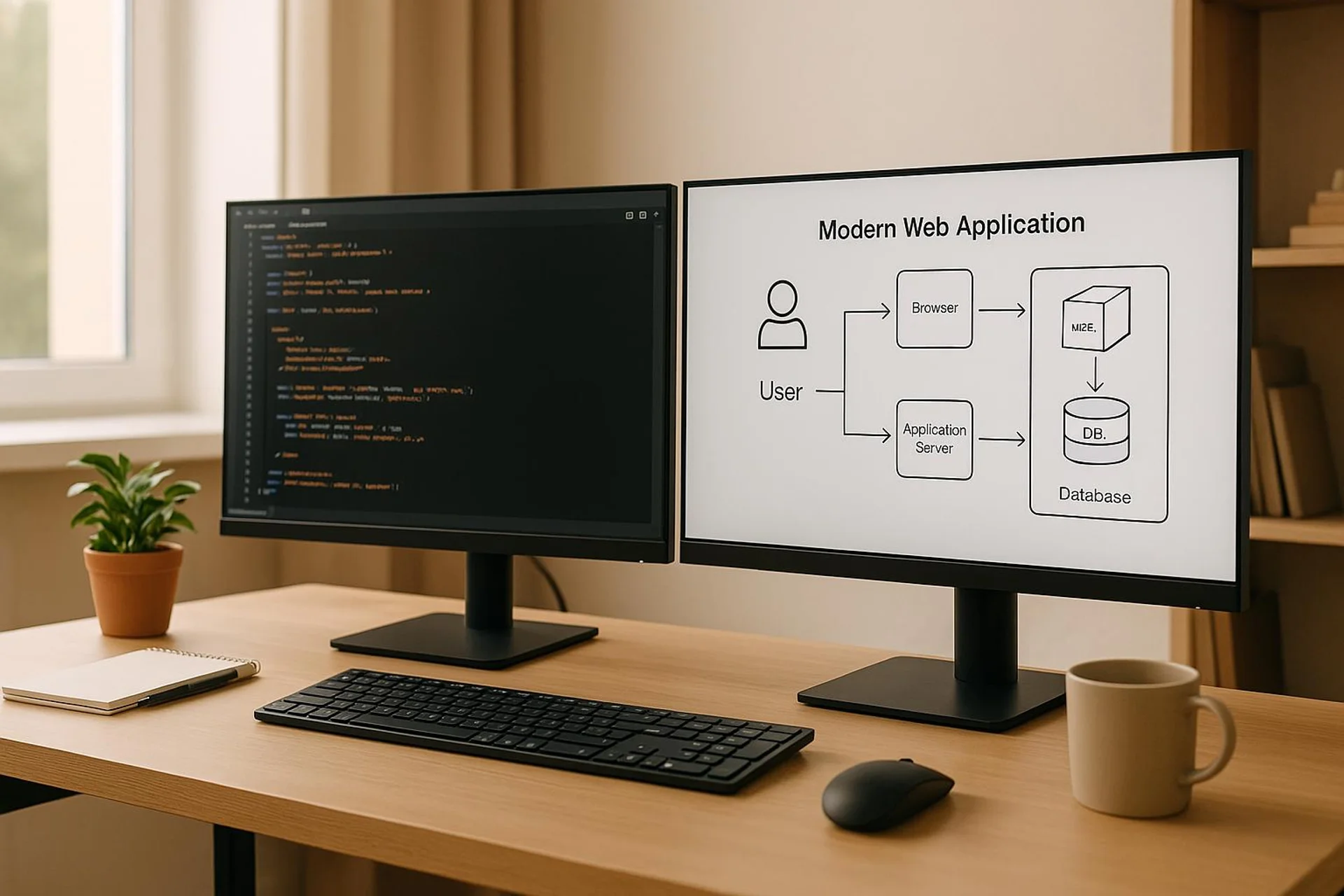

Map the full system architecture

Document everything: databases, application servers, third-party integrations, user interfaces, background jobs, cron tasks, and hidden dependencies. The goal is a complete picture before touching any code.

Codebase audit checklist:

- Identify deprecated functions, outdated patterns, and hardcoded values

- Check database queries for Active Record compatibility

- Document custom modifications and monkey patches

- List external API dependencies and their version requirements

- Profile slow queries, memory-heavy processes, and performance workarounds
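
Part of the checklist above can be automated. The following is a minimal sketch of a source scanner, not a complete audit tool. The names `AUDIT_PATTERNS`, `Finding`, `audit_source`, and `audit_tree` are hypothetical, and the pattern list is a small illustrative sample that you would extend for your own codebase.

```ruby
# Hypothetical audit helper: flags lines that match known-risky patterns.
# The pattern list is illustrative, not exhaustive.
AUDIT_PATTERNS = {
  "update_attributes (removed in Rails 6.1)" => /\.update_attributes\b/,
  "before_filter (removed in Rails 5.1)"     => /\bbefore_filter\b/,
  "URI.escape (removed in Ruby 3.0)"         => /\bURI\.escape\b/,
  "hardcoded URL"                            => %r{["']https?://[^"']+["']}
}.freeze

Finding = Struct.new(:file, :line_number, :label, :source)

# Scans one source string and returns a Finding per matching line and pattern.
def audit_source(file, source)
  source.each_line.with_index(1).flat_map do |line, number|
    AUDIT_PATTERNS.select { |_label, pattern| line.match?(pattern) }
                  .map { |label, _pattern| Finding.new(file, number, label, line.strip) }
  end
end

# Scans every Ruby file matched by the given globs (assumed project layout).
def audit_tree(globs = ["app/**/*.rb", "lib/**/*.rb"])
  Dir.glob(globs).flat_map { |path| audit_source(path, File.read(path)) }
end
```

Running `audit_tree` from the project root returns a flat list of findings that can be grouped by label to estimate how much work each category represents.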

Assess migration readiness

| Area | What to check | Risk level if ignored |
|---|---|---|
| Database schema | Rails naming conventions, proprietary features, stored procedures | High |
| Security | Outdated auth methods, unencrypted data, weak password policies | Critical |
| Dependencies | Abandoned gems, version conflicts, native extensions | Medium |
| Business continuity | Downtime tolerance, rollback requirements, data backup procedures | High |
| Team expertise | Rails knowledge, testing experience, deployment familiarity | Medium |

Create a migration plan

Set clear objectives: performance improvement, cost reduction, security hardening, or all three. Break the migration into milestones, starting with less critical components to test your approach.

Build a realistic timeline with buffer for unexpected issues. Migrations consistently take longer than estimated. Include rollback procedures for every milestone.

Establish stakeholder communication: who needs to know what, and when. Regular progress updates prevent surprises and maintain organizational support.

How do you upgrade Ruby and Rails versions safely?

Always upgrade sequentially. Jumping from Ruby 2.5 to 3.2 or Rails 5.2 to 7.1 in one step creates debugging nightmares because you cannot isolate which version change caused a failure.

Ruby upgrade path:

2.5 -> 2.6 -> 2.7 -> 3.0 -> 3.1 -> 3.2

At each step:

- Branch your code to isolate changes

- Run `bundle update` and resolve conflicts

- Fix deprecation warnings (Ruby 2.7 warns about keyword argument changes enforced in 3.0)

- Run the full test suite

- Test in a staging environment that mirrors production

- Document patches and workarounds
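
The keyword argument change mentioned in the steps above is the one most likely to surface during the 2.7 to 3.0 step. Here is a small illustration, assuming a hypothetical `create_user` method. The same call pattern behaves differently depending on the Ruby version, while the explicit double splat works on all of them.

```ruby
# Hypothetical method with keyword arguments, typical of service code.
def create_user(name:, admin: false)
  { name: name, admin: admin }
end

options = { name: "Ada", admin: true }

# Ruby 2.7 accepts create_user(options) but emits a deprecation warning
# when warnings are enabled. Ruby 3.0+ raises ArgumentError, because a
# positional Hash is no longer converted to keywords implicitly.
legacy_call_works = begin
  create_user(options)
  true
rescue ArgumentError
  false
end

# The fix that works on every version: splat the hash explicitly.
user = create_user(**options)
```

On Ruby 2.7.2 and later, deprecation warnings are off by default, so run the test suite with `-W:deprecated` (or set `Warning[:deprecated] = true`) to see these call sites before moving to 3.0.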

Rails upgrade path:

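Use the official Rails upgrade guides for each version. They document the breaking changes and the code updates each release requires. If you have to stay on an unsupported Rails version while the upgrade is in progress, a commercial long-term-support offering such as Rails LTS can cover security patches in the meantime.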

Watch your Gemfile carefully. Some gems lag in supporting newer Ruby or Rails versions. Check compatibility before each upgrade step, and find maintained alternatives for abandoned gems.
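
A compatibility check like the one described above can be sketched with the `Gem::Version` and `Gem::Requirement` classes that ship with RubyGems. The gem names and constraints in `DECLARED_RAILS_SUPPORT` are invented for illustration. In a real audit they would come from each gem's published gemspec.

```ruby
require "rubygems" # loaded by default; required here for clarity

# Hypothetical data: the Rails constraint each gem declares in its gemspec.
DECLARED_RAILS_SUPPORT = {
  "legacy_uploader" => ">= 4.2, < 6.0",
  "report_builder"  => ">= 5.0"
}.freeze

# Returns the names of gems whose declared constraint excludes the target version.
def blockers_for(target_version, declared = DECLARED_RAILS_SUPPORT)
  target = Gem::Version.new(target_version)
  declared.reject do |_gem_name, constraint|
    Gem::Requirement.new(*constraint.split(/\s*,\s*/)).satisfied_by?(target)
  end.keys
end
```

Calling `blockers_for` with the version of the next upgrade step gives the list of gems that need an update or a replacement before that step can start.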

What is the safest approach to database migration?

Use Active Record migrations for schema changes

Active Record migrations provide version-controlled, reversible schema changes. Always write both up and down methods:

```ruby
class SplitUserProfile < ActiveRecord::Migration[7.1]
  def up
    create_table :user_profiles do |t|
      t.references :user, null: false, foreign_key: true
      t.string :bio
      t.string :avatar_url
      t.timestamps
    end

    # Make sure the model sees the table that was just created.
    UserProfile.reset_column_information

    # Copy profile data in batches before dropping the old columns.
    User.find_each do |user|
      UserProfile.create!(
        user_id: user.id,
        bio: user.bio,
        avatar_url: user.avatar_url
      )
    end

    remove_column :users, :bio
    remove_column :users, :avatar_url
  end

  def down
    add_column :users, :bio, :string
    add_column :users, :avatar_url, :string

    # Refresh the cached column list so User accepts the restored attributes.
    User.reset_column_information

    UserProfile.find_each do |profile|
      profile.user.update!(
        bio: profile.bio,
        avatar_url: profile.avatar_url
      )
    end

    drop_table :user_profiles
  end
end
```

Handle legacy naming conventions

Legacy databases rarely follow Rails conventions. Map the model onto the existing table and primary key names instead of renaming everything at once:

```ruby
class LegacyUser < ApplicationRecord
  self.table_name = "user_data"
  self.primary_key = "usr_id"
end
```

Migrate data safely

- Always back up before migrating. Test your restore procedure. A backup you cannot restore is worthless.

- Use `find_in_batches` for large datasets to avoid memory issues and timeouts.

- Log progress every few thousand records during long-running migrations.

- Separate schema changes from data transformations into individual migration files.

- Consider shadow tables for major structural changes: create the new table, populate it gradually, then switch over.
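
The batching and progress-logging advice above can be sketched in plain Ruby. The `migrate_in_batches` helper is hypothetical and works on any Enumerable. In a Rails app, `find_in_batches` would take the place of `each_slice` and load each batch from the database. Collecting failures instead of aborting is one possible design choice, not the only reasonable one.

```ruby
require "logger"

# Processes records in fixed-size batches and logs progress as it goes.
# `records` is any Enumerable; the block performs the per-record transformation.
def migrate_in_batches(records, batch_size: 1000, log_every: 5000, logger: Logger.new($stdout))
  processed = 0
  failed = []

  records.each_slice(batch_size) do |batch|
    batch.each do |record|
      begin
        yield record
      rescue StandardError => e
        failed << [record, e.message] # collect failures instead of aborting
      end
      processed += 1
      logger.info("migrated #{processed} records") if (processed % log_every).zero?
    end
  end

  { processed: processed, failed: failed }
end
```

The returned hash gives a simple verification hook: compare `processed` with the source row count and inspect `failed` before switching anything over.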

Modernize the database structure

Legacy databases accumulate problems:

- Denormalized tables that hurt query performance

- Missing indexes on frequently queried columns

- Overly broad data types (`TEXT` for short strings)

- Missing `NOT NULL` constraints

- Hard deletes with no audit trail

Address these incrementally. Add indexes based on slow query analysis. Tighten constraints one table at a time. Consider soft deletes (deleted_at timestamp) for audit trail requirements.
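
To make the soft-delete idea concrete, here is a plain-Ruby sketch. `Record` is a hypothetical stand-in for a model row with a `deleted_at` column, and `active` mimics a default `WHERE deleted_at IS NULL` filter. A Rails app would implement the same behavior with a column and a scope.

```ruby
# Plain-Ruby sketch of soft deletion; Record stands in for a database row.
Record = Struct.new(:id, :name, :deleted_at) do
  # Marks the record as deleted without removing it.
  def soft_delete(now = Time.now)
    self.deleted_at ||= now # keep the first deletion time for the audit trail
    self
  end

  def deleted?
    !deleted_at.nil?
  end
end

# Equivalent of filtering on "deleted_at IS NULL".
def active(records)
  records.reject(&:deleted?)
end
```

Because the row is kept, the deletion time remains available for audits, and the record can be restored by clearing `deleted_at`.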

How do you break a monolith into modules?

Identify logical boundaries within the application: user management, billing, inventory, reporting. Each boundary can become a Rails engine or a standalone service.

Phased approach:

- Build new features as independent modules

- Extract shared functionality into libraries

- Replace legacy components one at a time

- Define clear data ownership per module

- Use API endpoints for inter-module communication

Rails engines let you modularize within the Rails ecosystem before committing to microservices. This is lower risk and often sufficient.
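
As an illustration of clear data ownership, the sketch below shows a hypothetical `Billing` boundary in plain Ruby. Other modules call only its public methods and never reach into its storage. In a real application the same shape could sit behind a Rails engine or an HTTP endpoint; the in-memory hash is only a stand-in for the module's own tables.

```ruby
# Hypothetical Billing boundary: callers use the public API only.
module Billing
  Invoice = Struct.new(:id, :user_id, :amount_cents)

  class << self
    # Public API: creates an invoice and returns its id.
    def create_invoice(user_id:, amount_cents:)
      invoice = Invoice.new(next_id, user_id, amount_cents)
      store[invoice.id] = invoice
      invoice.id
    end

    # Public API: total invoiced amount for one user.
    def total_for(user_id)
      store.values.select { |invoice| invoice.user_id == user_id }.sum(&:amount_cents)
    end

    private

    # Internal storage, owned by this module alone.
    def store
      @store ||= {}
    end

    def next_id
      store.size + 1
    end
  end
end
```

Keeping `store` private means a later move from an in-process module to a separate service changes the implementation of two methods, not every caller.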

Integrate modern frontend frameworks

Set config.api_only = true for API-first development. React, Vue.js, or other frontend frameworks consume your Rails API through standardized JSON endpoints.

```ruby
# Use jsonapi-serializer for consistent response format
class UserSerializer
  include JSONAPI::Serializer

  attributes :name, :email, :created_at
end
```

Configure CORS with rack-cors (restrict to trusted domains in production). Implement API versioning from the start using URL namespacing.
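
A typical setup for the CORS and versioning advice might look like the configuration fragment below. It assumes the rack-cors gem is in the Gemfile. The origin `https://app.example.com`, the `/api/*` path, and the `users` resource are placeholders, not values from any real project.

```ruby
# config/initializers/cors.rb
Rails.application.config.middleware.insert_before 0, Rack::Cors do
  allow do
    origins "https://app.example.com" # placeholder: list only trusted frontends
    resource "/api/*",
             headers: :any,
             methods: %i[get post put patch delete options head]
  end
end

# config/routes.rb
Rails.application.routes.draw do
  # URL namespacing: requests go to /api/v1/users
  namespace :api do
    namespace :v1 do
      resources :users, only: %i[index show]
    end
  end
end
```

With this layout, a later breaking change can ship under a new `v2` namespace while existing clients keep using `v1`.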

Modernize authentication

Legacy session-based auth does not scale well with API-first architectures. Options:

| Method | Use case | Gem |
|---|---|---|
| JWT | Stateless API auth | jwt |
| OAuth 2.0 | Third-party integrations, SSO | doorkeeper |
| MFA/TOTP | Enhanced security | devise-two-factor |

Add rate limiting with rack-attack to protect API endpoints. Implement refresh token rotation to balance security with user convenience.
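
Refresh token rotation means every refresh exchanges the old token for a new one, and a replayed old token is treated as a sign of theft. The sketch below shows that logic with an in-memory store. `RefreshTokenStore` and its methods are hypothetical names, and a production system would persist hashed tokens with expiry times instead of keeping raw tokens in memory.

```ruby
require "securerandom"

# In-memory sketch of refresh token rotation with reuse detection.
class RefreshTokenStore
  class ReuseDetected < StandardError; end

  def initialize
    @active = {}  # token => user_id
    @retired = {} # token => user_id, kept to detect replay
  end

  def issue(user_id)
    token = SecureRandom.hex(32)
    @active[token] = user_id
    token
  end

  # Exchanges a valid token for a new one and retires the old token.
  def rotate(token)
    if @retired.key?(token)
      revoke_all(@retired[token]) # replay of an old token: assume theft
      raise ReuseDetected, "refresh token reused"
    end

    user_id = @active.delete(token)
    raise ArgumentError, "unknown refresh token" if user_id.nil?

    @retired[token] = user_id
    issue(user_id)
  end

  def valid?(token)
    @active.key?(token)
  end

  private

  def revoke_all(user_id)
    @active.delete_if { |_token, owner| owner == user_id }
  end
end
```

Revoking every active token for the user on reuse forces a fresh login, which is the usual trade-off: a rare inconvenience in exchange for cutting off a stolen token.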

What is the test-driven approach to migration?

Before changing anything, improve test coverage. Aim for 80% minimum before starting structural changes.

Testing strategy during migration:

- Write characterization tests that capture current behavior

- Create unit tests for business logic before extracting it into service objects

- Build integration tests for critical user workflows

- Run the full test suite after every incremental change

- Add performance benchmarks to detect migration-induced slowdowns
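
A characterization test records what the code does today, quirks included, and fails when a refactoring changes any of it. The sketch below shows the idea in plain Ruby. `legacy_shipping_cents` is an invented example of quirky legacy logic, and `characterize` and `regressions` are hypothetical helpers; in practice the same checks would live in your existing test framework.

```ruby
# Hypothetical legacy method with a quirk nobody remembers the reason for.
def legacy_shipping_cents(weight_kg, country)
  base = country == "CH" ? 700 : 1200
  base += 350 if weight_kg > 5
  base -= 1 if weight_kg == 13 # undocumented quirk, captured on purpose
  base
end

# Records the current output for each input as the expected behavior.
def characterize(inputs)
  inputs.to_h { |args| [args, yield(*args)] }
end

# Returns the inputs whose output differs from the recorded snapshot.
def regressions(snapshot)
  snapshot.reject { |args, expected| yield(*args) == expected }.keys
end

INPUTS = [[1, "CH"], [6, "CH"], [13, "DE"], [5, "DE"]].freeze
SNAPSHOT = characterize(INPUTS) { |weight, country| legacy_shipping_cents(weight, country) }
```

Running `regressions(SNAPSHOT)` against a rewritten implementation lists exactly the inputs where behavior changed, which turns "did we break anything?" into a reviewable diff.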

Use parallel_tests to keep the test suite fast as it grows. Configure CI to run tests on every push and block merges on failures.

Practical Implementation: The USEO Approach

Every legacy migration starts with the same question: rewrite or refactor? In 15+ years of Ruby development, we have learned that rewrites almost always fail. Incremental refactoring with a clear end state succeeds.

On the Yousty HR portal (13-year partnership), the application has been upgraded across multiple Rails versions, from early releases through Rails 7. Each upgrade followed the sequential approach: one version at a time, with the full test suite passing before moving to the next. The most challenging upgrade was the Ruby 2.7 to 3.0 transition because keyword argument changes affected hundreds of method calls across the codebase. We automated the detection of affected call sites with a custom RuboCop cop and fixed them systematically over two weeks.

Database migrations on Yousty required special care because the HR data model encodes Swiss employment regulations that cannot tolerate data corruption. We used shadow tables for every major structural change: build the new schema alongside the old, migrate data in batches with verification, switch the application over, then drop the old tables after a 30-day observation period. Zero data loss across dozens of schema migrations over 13 years.

For Triptrade (travel MVP), the situation was different. The “legacy system” was a collection of spreadsheets and manual processes, not an old Rails app. The migration meant encoding business logic into a Rails application for the first time. We focused on getting the data model right from the start, using Active Record validations and constraints to enforce business rules that had previously existed only in people’s heads.

Migration patterns by legacy system age:

| System age | Primary challenge | Recommended approach |
|---|---|---|
| 3-5 years | Outdated dependencies, missing tests | Upgrade gems, add test coverage, refactor iteratively |
| 5-10 years | Multiple Rails version gap, architectural debt | Sequential version upgrades, extract service objects |
| 10-15 years | Fundamental architecture mismatch | Strangler fig pattern with parallel operation |
| 15+ years | Technology stack no longer supported | Phased rewrite with data migration, preserving business rules |
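
The strangler fig pattern named in the table routes traffic to the new system one slice at a time while the legacy system keeps serving everything else. Here is a minimal sketch, assuming both systems can be represented as callables. `StranglerRouter` and the path prefixes are hypothetical, and a real deployment would usually do this at the proxy or Rack middleware level.

```ruby
# Routes each request path to the new app when its prefix has been migrated,
# and to the legacy app otherwise. Both handlers are plain callables here.
class StranglerRouter
  def initialize(legacy:, modern:, migrated_prefixes: [])
    @legacy = legacy
    @modern = modern
    @migrated_prefixes = migrated_prefixes
  end

  # Marks another slice of functionality as served by the new system.
  def migrate!(prefix)
    @migrated_prefixes |= [prefix]
  end

  def call(path)
    migrated = @migrated_prefixes.any? { |prefix| path.start_with?(prefix) }
    (migrated ? @modern : @legacy).call(path)
  end
end
```

Rolling back a slice is as simple as removing its prefix, which is what makes parallel operation safer than a single cutover.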

Common migration mistakes we have seen:

- Big-bang rewrites that take 18 months, miss the original deadline, and deliver a system with fewer features than the one it replaced

- Skipping the audit and discovering critical undocumented behavior mid-migration

- Upgrading Ruby and Rails simultaneously instead of sequentially

- Insufficient testing before structural changes, leading to regressions that erode team confidence

- No rollback plan for database migrations, turning recoverable errors into emergencies

The most reliable migration pattern is boring: audit thoroughly, upgrade one version at a time, test exhaustively, communicate constantly. There are no shortcuts, but there is a predictable path to a modern, maintainable application.

FAQs

What are the biggest risks when migrating legacy systems, and how do you mitigate them?

Data loss, extended downtime, compatibility breaks with existing integrations, and staff resistance. Mitigate with thorough audits before starting, phased migration (never big-bang), tested rollback procedures for every step, encryption during data transfer, and pilot migrations to catch issues early. Keep the old system running in parallel until the new system is proven stable.

Why is Ruby on Rails effective for legacy system migration?

Active Record migrations provide version-controlled, reversible database changes. The sequential upgrade path (one version at a time) reduces risk. The gem ecosystem includes tools for every migration scenario: test frameworks, background job processors, API serializers, and authentication libraries. Rails conventions enforce consistency, which is exactly what legacy code lacks.

How do you ensure data integrity during a legacy database migration?

Back up before every migration and verify the restore procedure works. Use find_in_batches for large datasets. Separate schema changes from data transformations into individual migration files. Add foreign key constraints only after cleaning up orphaned records. Use shadow tables for major structural changes. Log progress during long-running migrations and run data verification queries after each step.